Glide OpenAI Integration: Complete Practical Guide

6 min

read

Learn how to integrate OpenAI with Glide step-by-step, including setup, API connection, AI workflows, use cases, costs, and real limitations.

Glide's native OpenAI integration lets you add real AI functionality to your apps without leaving the editor or managing complex backend infrastructure.

But knowing what it can do, what it cannot do, and how to build with it correctly is what separates a working AI feature from a frustrating prototype that never ships.

This guide covers everything from initial setup through advanced architecture decisions, including the cost, token, and scaling considerations that most tutorials skip entirely.

If you're evaluating whether AI belongs in your app at all, review the practical benefits of Glide AI-powered apps in real business environments.

What Can You Actually Do With Glide OpenAI Integration?

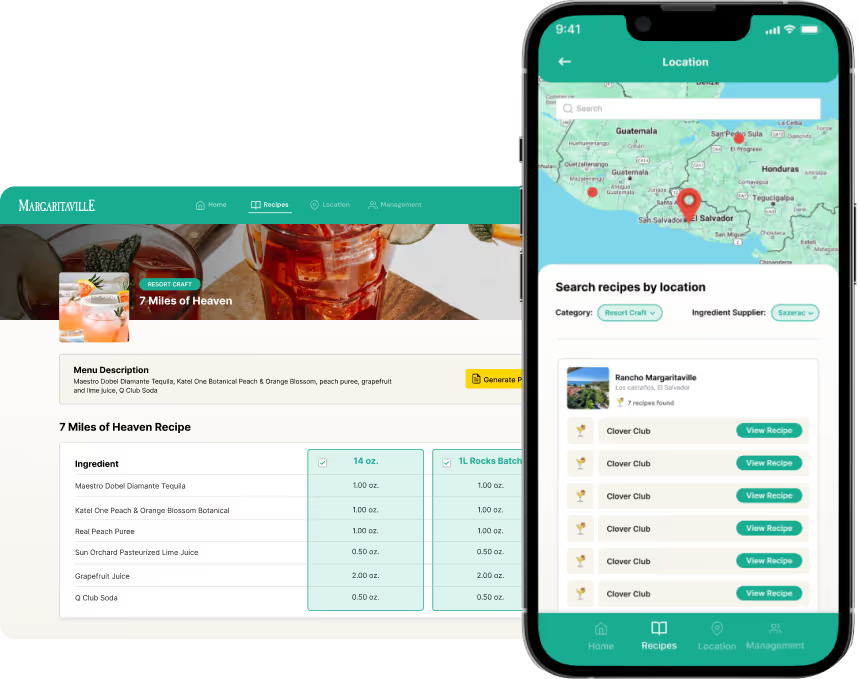

Glide's native OpenAI integration supports text generation, chat with conversation history, question answering from Glide tables, image generation via DALL-E, speech-to-text transcription, and structured output formatting. It covers the majority of practical AI use cases for business apps.

- Generate text from custom prompts: summarize submissions, rewrite content, draft responses, and produce structured outputs from data inputs

- Build chatbots with conversation history: use the Complete Chat with History action to create multi-turn conversation flows that remember prior messages within a session

- Answer questions using Glide tables: the Answer Question About a Table action lets OpenAI query your data and return natural language answers

- Generate images with DALL-E: create AI-generated images from text prompts directly inside a Glide workflow

- Speech-to-text: transcribe audio inputs into text columns using OpenAI's Whisper model

- Text-to-speech: convert text outputs into spoken audio for voice-interface features

- Structured output: use prompt engineering to force JSON-formatted responses that map cleanly to Glide columns and actions

You can also see how these capabilities work in production inside Glide AI features in action.

When Is Glide OpenAI Integration the Right Choice?

Native Glide OpenAI integration is the right choice for lightweight AI features inside internal tools, content generation, AI-powered data enrichment, simple chatbots, and MVP-level AI functionality where speed of implementation matters more than deep model control.

- Lightweight AI features inside internal tools where you need categorization, summarization, or text generation without engineering overhead

- AI summaries and rewriting for operations apps where incoming text data needs to be condensed or reformatted automatically

- Simple chatbot experiences inside business apps where users ask questions and receive generated answers within a session

- AI-powered data enrichment inside Glide tables where every new row benefits from an automatically generated insight, tag, or classification

- MVP-level AI features where you need to validate whether AI adds value to your product before investing in a custom AI architecture

Many of these patterns align with documented Glide use cases across internal tools and automation systems.

When Is Glide OpenAI Integration Not Enough?

Glide's native integration does not support function calling, the OpenAI Assistants API, complex tool orchestration, persistent memory across sessions, or advanced token and context management. These gaps matter for enterprise AI apps and complex pipelines.

- No direct function calling: OpenAI's function calling capability, which lets the model trigger structured tool actions, is not exposed in Glide's native integration

- No Assistants API support: OpenAI's Assistants API with persistent threads, file retrieval, and built-in memory is not available natively inside Glide

- Limited complex tool orchestration: multi-step AI pipelines where the model decides which tools to invoke in sequence require external orchestration via Make, Zapier, or custom backend code

- Token and context size limits apply: large documents, long conversation histories, and data-heavy queries can exceed what Glide's integration handles gracefully

- No advanced memory management: conversation context does not persist automatically across sessions without custom table architecture to store and retrieve it

Knowing these limits before you build is how you avoid hitting them mid-project.

If you're still deciding whether Glide is the right foundation, review the full Glide advantages and disadvantages breakdown before committing.

For projects that require deeper control, compare structured Glide alternatives designed for more complex AI orchestration.

How Do You Connect OpenAI to Glide Step by Step?

Connecting OpenAI to Glide requires enabling the integration in your project settings, adding your OpenAI API key, selecting a model, and configuring your first action. The entire setup takes under ten minutes for a basic text generation workflow.

- Step 1: Enable OpenAI integration in Glide Open your Glide project, navigate to Settings, and find the Integrations section. Select OpenAI from the available integrations list.

- Step 2: Add your OpenAI API key Log into platform.openai.com, navigate to API Keys, and generate a new secret key. Paste this key into Glide's OpenAI integration settings. Your key is stored securely and used for all OpenAI calls from that project.

- Step 3: Choose your model version Glide supports GPT-4o, GPT-4 Turbo, GPT-3.5 Turbo, and other current OpenAI models. GPT-4o offers the best balance of capability and cost for most Glide use cases. Select the model that fits your accuracy and budget requirements.

- Step 4: Set up your first action In a button, form, or workflow, add a new action and select the OpenAI integration. Choose Generate Text for simple prompt-based outputs or Complete Chat with History for conversation flows. Configure your prompt, connecting Glide column values as dynamic inputs.

- Step 5: Test output inside Glide Run the action in the builder's preview mode or on a test device. Check that the output appears in the correct column, that formatting matches your expectations, and that the response time is acceptable for your use case.

If you're building for cross-device access, here's how a Glide mobile app performs in real-world deployments.

How Do You Build a Real AI Chatbot in Glide With Conversation History?

Building a chatbot with history in Glide requires using the Complete Chat with History action, a table to store conversation messages, session ID management to separate user conversations, and a display component to render the chat interface.

- Use the Complete Chat with History action rather than Generate Text. This action accepts a structured array of prior messages and passes them to OpenAI as conversation context

- Create a Messages table with columns for session ID, role (user or assistant), message content, and timestamp. Every message in a conversation is stored as a row

- Assign a session ID when a user starts a conversation. This can be based on the user's email, a generated UID, or a combination of user and timestamp. All messages in that conversation share the same session ID

- When the user submits a new message, your workflow queries all prior messages with that session ID, passes them to the Complete Chat with History action, stores OpenAI's response as a new assistant row, and displays the updated conversation

- For multi-user apps, Row Owners or filters on the session ID ensure each user only sees their own conversation history

- Response formatting matters: prompt OpenAI to respond in plain sentences rather than markdown where markdown rendering is not supported by your display component

If you're unfamiliar with Glide's underlying architecture, understanding how Glide PWAs work helps clarify session and state management constraints.

How Do You Use Glide Tables as an AI Knowledge Base?

The Answer Question About a Table action lets OpenAI read a Glide table and return a natural language answer to a user's question. It works well for small to medium datasets but degrades with very large tables where token limits become a constraint.

- The Answer Question About a Table action passes your table's data to OpenAI along with the user's question and instructs the model to answer based on that data

- Structure your table for clean answers: use clear column names, consistent value formats, and avoid columns with long unstructured text that consume token budget without adding answer quality

- This approach works well for product catalogs, staff directories, policy documents structured as rows, FAQ tables, and inventory data where questions have clear answers in the data

- It starts to fail with very large tables: OpenAI has a context window limit, and tables with thousands of rows or many wide columns will exceed it. Glide sends a sample of the data, not necessarily the complete table, which can produce incomplete or inaccurate answers

- For large datasets, chunk your data into smaller focused tables by category or topic, and route the user's question to the most relevant table using a classification step before calling the Answer Question action

- Always include a fallback in your UI for cases where the model cannot find an answer in the provided data

Before scaling AI over large datasets, review how Glide scalability works in data-heavy environments.

What Are the Prompt Engineering Best Practices for Glide Apps?

Good prompts in Glide apps are specific, structured, and defensive. Request JSON output when the response feeds into columns or logic, set clear system instructions, control response length explicitly, and always include instructions that reduce hallucination risk.

- Always request structured output when the result feeds into Glide columns or actions. Telling OpenAI to respond in JSON with defined keys makes parsing reliable and prevents formatting surprises

- Force JSON explicitly when needed: include an instruction like "Respond only with a JSON object containing the keys: category, priority, summary" and nothing else in your prompt

- Set system instructions clearly: use the system message field to define the model's role, tone, and boundaries before the user message. A support bot should know it is a support bot with defined scope

- Control response length with explicit instructions: "Respond in no more than two sentences" or "Return only the category label, nothing else" prevents verbose responses that overflow display components

- Reduce hallucination risk by grounding the model in provided data: instruct it to answer only based on the information given and to respond with "I don't know" rather than inferring when data is absent

- Use dynamic column values as prompt inputs carefully: validate and sanitize column values before inserting them into prompts to avoid prompt injection from user-submitted data

You can accelerate structured AI workflows using production-ready Glide app templates built for automation use cases.

What Do OpenAI Integration Costs and Token Limits Look Like?

Glide's OpenAI integration bills through your own OpenAI API account, not through Glide's plan. You pay OpenAI per token consumed. Costs vary significantly by model and usage volume, and unmonitored apps can generate unexpected charges.

- Glide plan requirements: the OpenAI integration is available on Glide's paid plans. Check current Glide pricing for the specific plan tier that includes third-party integrations

- OpenAI API billing: costs are charged directly to your OpenAI account based on tokens consumed. GPT-4o costs differ from GPT-3.5 Turbo costs by a significant margin. Check OpenAI's current pricing page for exact per-token rates as these change

- Token consumption basics: every character in your prompt, the table data passed to OpenAI, the conversation history included, and the model's response all consume tokens. Longer prompts and larger data payloads cost more

- Estimating cost per feature: a basic text generation action with a short prompt and brief response might consume 200 to 500 tokens per call. A chat completion with conversation history might consume 1,000 to 3,000 tokens per exchange. A table question on a medium dataset could consume 3,000 to 8,000 tokens per query

- Avoiding runaway charges: set usage limits in your OpenAI account dashboard, monitor usage weekly during early deployment, and avoid triggering AI actions in bulk loops on large datasets without a throttle mechanism

Many production implementations, including structured Glide app examples, manage AI calls strategically to control token consumption.

What Are the Performance and Scalability Considerations?

Glide OpenAI integration works well for moderate usage but faces latency, token, and context window constraints at scale. For high-volume or complex AI pipelines, external automation tools become necessary.

- Token limits cap how much data you can pass to OpenAI in a single call. GPT-4o has a 128,000 token context window, which is large but not unlimited, and large table queries or long conversation histories can approach it

- Context window size affects knowledge base quality: the more conversation history or table data you include, the less room remains for the model's response, and the more the model's attention is divided across the full context

- Latency expectations: GPT-4o calls typically take one to five seconds to return depending on prompt size and response length. For real-time user-facing features, design your UI to handle this wait gracefully with loading states

- Handling large documents: do not pass full documents as a single prompt input. Chunk documents into sections, embed a summary layer, or use an external retrieval system to fetch only relevant sections before querying OpenAI

- When to move to external automation: if your AI logic requires more than two or three sequential steps, involves conditional branching based on AI output, or needs to call OpenAI more than once per user action, Make or Zapier workflows give you better control and observability

This becomes especially relevant when combining AI with enterprise systems like connecting Salesforce to Glide.

How Do You Extend Glide OpenAI Integration With Automation Tools?

Make and Zapier extend Glide's native OpenAI integration by enabling multi-step AI workflows, webhook-triggered logic, access to the OpenAI Assistants API, and hybrid architectures where Glide handles the interface while external tools manage complex AI orchestration.

- When to use Make or Zapier: any AI workflow requiring more than two sequential steps, conditional logic based on AI output, or access to OpenAI features not exposed natively in Glide should be moved to an external automation layer

- Using webhooks for complex logic: Glide can trigger a webhook from a button or workflow action, passing data to Make or Zapier, which handles the multi-step AI processing and writes the result back to a Glide table via API

- Calling the OpenAI Assistants API externally: Make and Zapier both support direct HTTP calls to OpenAI's Assistants API, enabling persistent threads, file retrieval, and memory management that Glide's native integration does not expose

- Hybrid architecture: Glide manages the user interface, form collection, data display, and simple AI calls natively. Complex AI orchestration, retrieval pipelines, and multi-model workflows run in Make or a custom backend, with results returned to Glide tables for display

This architecture gives you the speed of Glide's UI builder combined with the flexibility of purpose-built AI tooling.

What Are the Common Problems and How Do You Fix Them?

The most common Glide OpenAI integration problems are API key errors, empty responses, token exceeded errors, poor output formatting, rate limit hits, and slow response times. Each has a specific cause and a direct fix.

- API key errors: verify the key is copied correctly with no trailing spaces, confirm the key has not expired or been revoked in your OpenAI dashboard, and ensure your OpenAI account has billing enabled and a valid payment method

- Empty responses: check that your prompt is not blank or entirely composed of empty column values. Add fallback text to dynamic inputs so the prompt is always valid even when column values are missing

- Token exceeded errors: reduce the amount of data passed to OpenAI by filtering table rows before the action runs, shortening conversation history to the last five to ten messages, or switching to a model with a larger context window

- Poor formatting: add explicit formatting instructions to your prompt. If the output appears in markdown when you need plain text, instruct the model to respond without markdown formatting

- Rate limits: OpenAI rate limits apply per API key and per model. If you hit them, add delays between bulk AI triggers, upgrade your OpenAI usage tier, or distribute calls across multiple workflows rather than triggering them simultaneously

- Slow responses: long prompts with large data payloads take longer to process. Reduce prompt size, use GPT-3.5 Turbo for latency-sensitive features where GPT-4 accuracy is not required, and add loading states to your UI so users understand a response is incoming

What Are the Real Business Use Cases for Glide OpenAI Integration?

Practical Glide OpenAI applications include AI customer support assistants, internal knowledge base bots, content generation tools, AI data cleaning workflows, and lead qualification systems. These are production use cases, not theoretical ones.

- AI customer support assistant: a Glide app where support staff or customers type questions and receive AI-generated answers based on a product knowledge table, with conversation history maintained per session

- Internal knowledge base bot: employees ask questions about company policies, procedures, or product documentation stored in Glide tables, and OpenAI surfaces relevant answers in natural language. For global teams, you can also translate your Glide apps to any language without rebuilding your AI workflows.

- Content generation for users: a Glide app where users input parameters (topic, tone, length) and receive AI-drafted content (email templates, product descriptions, social posts) directly in the app

- AI data cleaning tool: incoming form submissions or imported records pass through an AI column that standardizes formatting, corrects inconsistencies, and fills in missing structured values automatically

- AI lead qualification system: new leads submitted through a form are automatically scored, categorized by intent level, and assigned a follow-up priority based on their answers, with results written to a CRM table for the sales team

Inventory-heavy operations often combine this with structured systems like a Glide inventory app powered by AI enrichment.

Should You Use Native Glide OpenAI Integration or a Custom AI Setup?

Use native Glide OpenAI integration for MVPs, internal tools, lightweight AI features, and business apps where implementation speed matters.

Move to a custom or hybrid AI setup when you need enterprise AI orchestration, advanced model control, persistent memory, or high-volume reliability.

- Best for MVP and lightweight AI: native integration gets you to a working AI feature in hours. For validating whether AI adds value to your product, this speed advantage is decisive

- Not ideal for enterprise AI orchestration: if your AI requirements include Assistants API threads, fine-tuned models, retrieval-augmented generation, or multi-agent pipelines, design a custom backend from the start

- Ideal for internal business tools: the combination of Glide's interface speed and OpenAI's language capability covers the vast majority of internal tool AI use cases without requiring engineering resources

- When to upgrade architecture: when your AI workflows require more than three sequential steps, your token consumption generates meaningful monthly cost, or your users need persistent AI memory across sessions, it is time to move the AI layer outside of Glide

If you need architectural clarity before scaling, consider consulting experienced Glide experts who design AI-first Glide systems.

Want to Build an AI-Powered Glide App?

If you’re thinking about adding AI to a Glide app, don’t start with features.

Start with the workflow.

AI only creates value when it sits inside real operations. At LowCode Agency, we build AI-powered Glide apps that reduce manual work, improve decisions, and make your system smarter over time.

- AI embedded into real tasks

We connect Glide with AI models to handle summaries, smart suggestions, automated tagging, data classification, or assistant-style responses directly inside your app’s flow. - Structured data before intelligence

AI is only as good as the data it reads. We design clean table architecture, clear relationships, and permission layers so your AI features work reliably and securely. - Automation + AI combined

AI should trigger actions. We connect Glide with Make, Zapier, or custom APIs so insights turn into workflows, notifications, and automated updates without manual steps. - User-focused AI experience

We design simple interfaces that surface AI output clearly. No clutter. No confusion. Your team understands what the AI is doing and when to trust it. - Built to evolve

AI features require iteration. We stay involved after launch to refine prompts, adjust logic, and expand capabilities as your operations grow.

We are not experimenting with AI features for hype. We build AI-powered Glide apps that become part of your daily system.

If you’re ready to move from static tools to an intelligent operational app, let’s build it properly.

Last updated on

April 15, 2026

.

FAQs

Does Glide OpenAI integration require a paid plan?

Can I build a full AI chatbot inside Glide?

What are the token limits in Glide OpenAI integration?

Can Glide OpenAI integration access my app's data automatically?

How much does Glide OpenAI integration cost per month?

When should I use external tools instead of native Glide OpenAI integration?